By Kolento Hou

TL;DR: AI is driving the cost of learning toward zero. But that is precisely what makes education more expensive and more scarce, not more equal.

This is one of the most dangerous moments in the history of education. Not because AI will replace teachers. Not because students will stop learning.

But because education has always had two faces:

One is the face we like to talk about: enlightenment, growth, the joy of intellectual discovery. The other is the face we prefer not to look at directly: education is a system that allocates people to different opportunities, positions, and resources, and the core mechanism of that system has been assessment for five thousand years.

When AI makes output that resembles deep thinking cheap to produce, the entire assessment architecture built around that output begins to crack at its foundation.

To understand what this means, we first need to reckon with a cold truth an economist stated in 1973, and then go back to the beginning, to a school made of clay tablets in 3000 BCE.

In 1973, economist Michael Spence published a paper in the Quarterly Journal of Economics titled "Job Market Signaling." He would later share the 2001 Nobel Memorial Prize in Economic Sciences with George Akerlof and Joseph Stiglitz, in large part for this work.

His central argument fit in a single sentence, but it permanently altered how we understand education:

The market value of education does not come entirely from what it teaches. It comes from what it proves about who you are.

In labor markets, employers cannot directly observe a candidate's ability. They face a problem of information asymmetry: the candidate knows their own capabilities; the employer does not. Education steps in as a signal. But for a signal to work, it must be costly to acquire, and costlier for low-ability individuals than for high-ability ones, so that low-ability individuals find it not worth attempting. The moment anyone can easily obtain a credential, that credential loses its screening value.

This explains a paradox that has baffled observers in the internet era: the cost of acquiring knowledge has collapsed to near zero. You can study MIT course materials for free and read papers in many fields at no charge. Yet the premium commanded by elite university degrees has not fallen. In some fields, it has risen.

The reason: a decline in the cost of learning is not a decline in the cost of signaling. As more and more people can learn something, the act of learning it carries less and less signal value. A Harvard degree is valuable not only because of what Harvard teaches, but because admission to Harvard is itself an extraordinarily difficult signal to fake: it certifies that at eighteen, you were among the finest minds of your generation.
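Spence's requirement that a signal be "expensive enough" can be written down in a stylized two-type form. This is a common textbook simplification, not the full model of the 1973 paper, and the notation is an illustrative assumption: employers pay $w_H$ to anyone holding the credential and $w_L$ to everyone else, and reaching education level $e$ costs $c_H e$ for a high-ability person and $c_L e$ for a low-ability one, with $c_L > c_H$. A credential set at level $e^*$ separates the two groups only if it pays for high-ability people to acquire it and does not pay for low-ability people to imitate them:

$$
w_H - c_H e^* \ge w_L
\quad\text{and}\quad
w_H - c_L e^* < w_L
\;\Longrightarrow\;
\frac{w_H - w_L}{c_L} < e^* \le \frac{w_H - w_L}{c_H}.
$$

The window for $e^*$ exists only because $c_L > c_H$. In this simplified reading, anything that pushes the cost of producing the signal toward the same low level for everyone, so that $c_L$ approaches $c_H$, shrinks the window toward nothing, and the credential stops separating anyone.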

The history of MOOCs is the most vivid contemporary proof of this logic. In 2012, The New York Times dubbed the year "The Year of the MOOC." Coursera, edX, and their peers proclaimed they would disrupt university education. A decade later, competition for admission to Harvard, MIT, and Stanford was fiercer than ever. People used MOOCs to learn things, and then used elite degrees to find jobs.

The market for knowledge and the market for signals are two different markets. MOOCs conquered the knowledge market. They barely scratched the signal market.

With this framework in hand, history becomes clear.

Elite institutions have never derived their value from exclusive access to knowledge.

Education has been solving the same problem across every era: how do you certify, at scale, that a stranger can be trusted?

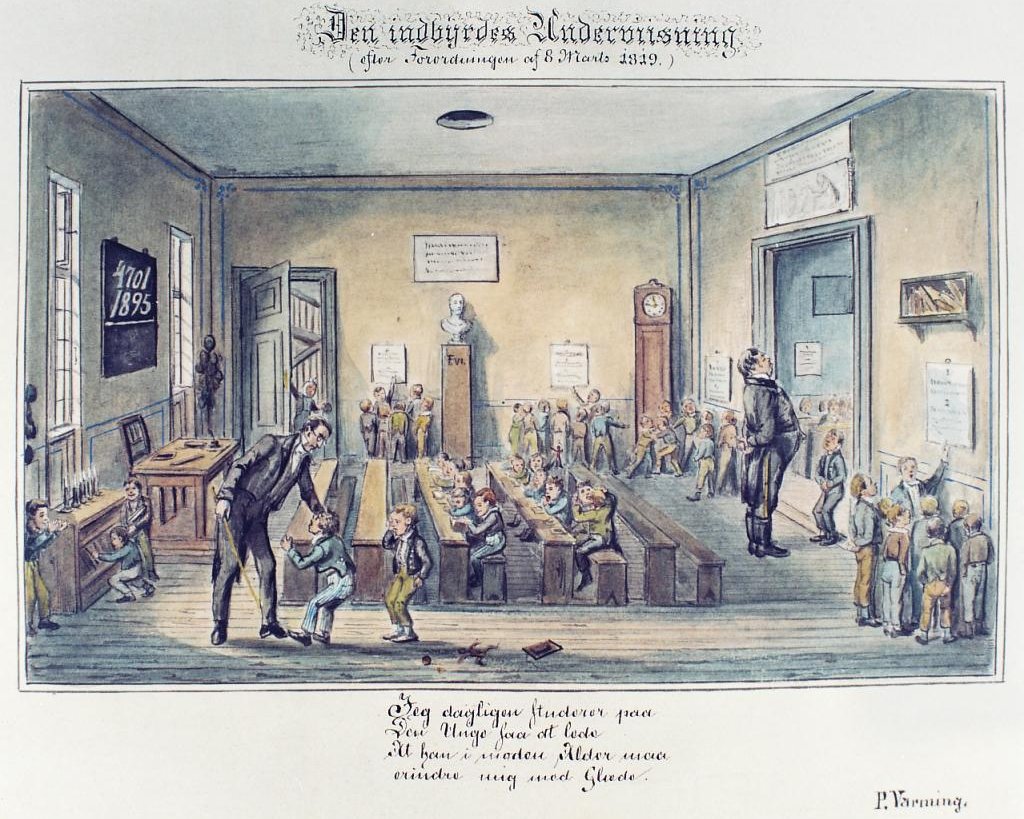

The world's earliest known schools were born in the Sumerian cities of what is now southern Iraq. They were called Edubba, meaning "tablet house" in Sumerian.

The motivation was entirely concrete: training scribes. By 3000 BCE, Sumerian trade networks stretched hundreds of kilometers. Merchants needed to record transactions; temples needed to register grain stocks; royal administrations needed to track taxes. Cuneiform script was extraordinarily complex — full mastery took twelve years of study, beginning around age seven or eight and ending around twenty.

The first impulse behind formal education was not enlightenment. It was professional certification. Scribal schools were vocational institutions, designed to produce a scarce class of trained specialists and supply them to temples, palaces, and merchants.

More crucially: the curriculum was determined by what could be assessed. Sign lists, vocabulary tables, mathematical problems, contract templates — all content that could be standardized, taught, and inspected. Capabilities that resisted standardized evaluation had no path into the curriculum.

Curriculum was reverse-engineered from assessment requirements, not the other way around.

This is the oldest paradox in the history of education, and it holds as true today as it did five millennia ago.

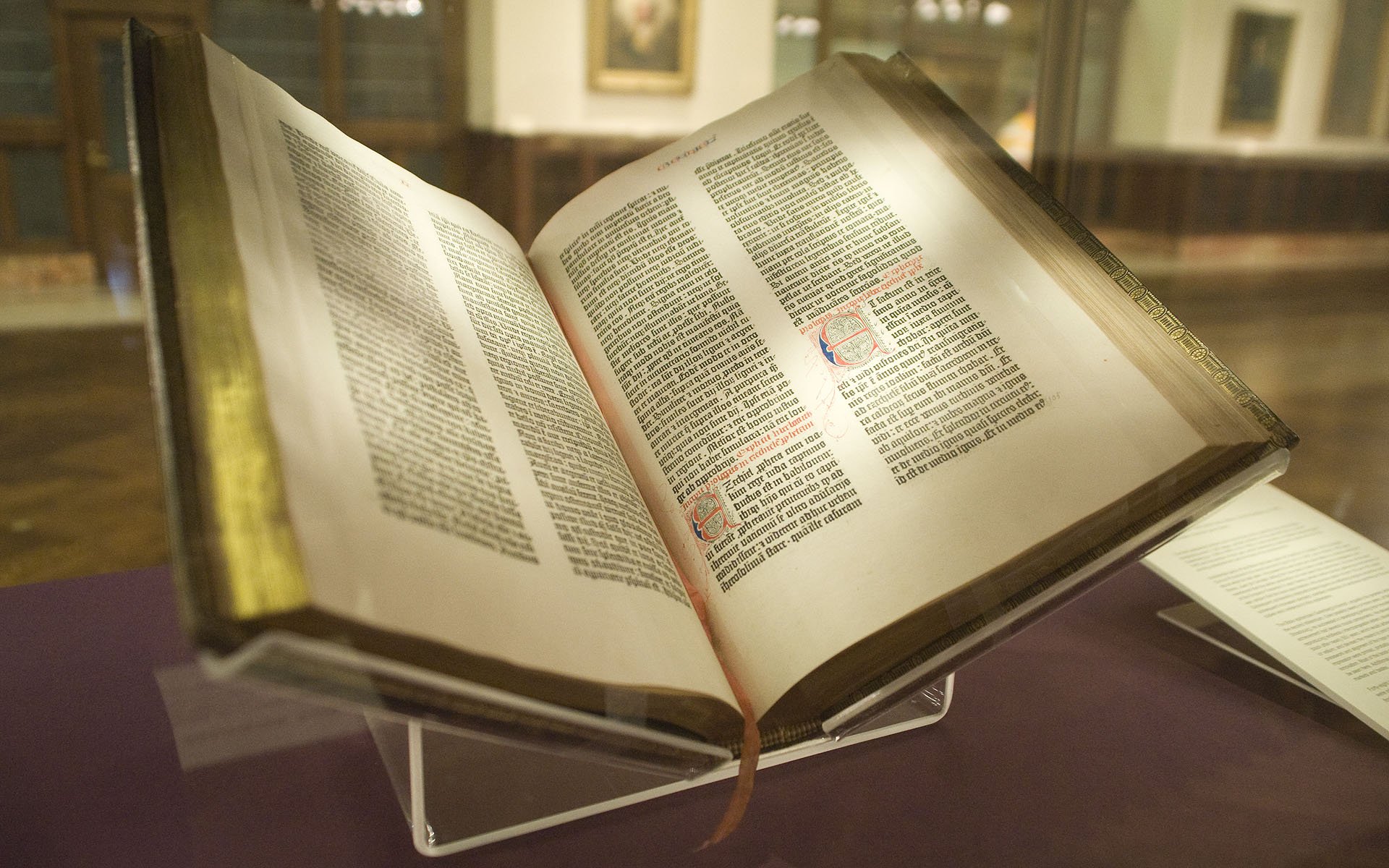

The oldest university in the Western world, the University of Bologna, is traditionally dated to 1088 in northern Italy, though the year 1088 is itself a 19th-century scholarly convention, fixed for an anniversary celebration. What is certain is that Bologna grew organically out of a student guild movement.

Students from Germany, France, and England flooded into Bologna with no legal standing whatsoever. Landlords could raise rents at will; courts would not hear their cases. So they formed a student guild, the universitas scholarium; the word universitas originally meant simply a collective with a shared purpose.

The academic degree took shape in this process. The power to confer degrees initially resided with the bishop's court and later passed to the faculties. The degree was, at its core, a license to practice: the Latin word doctor means teacher, and earning a doctorate meant earning the right to teach. In 1292, Pope Nicholas IV issued a decree confirming that degrees from Bologna and Paris were valid throughout the Christian world.

A university degree was never primarily a proof of what you learned. It was a permission slip confirming you could be trusted to enter a profession.

It was structurally identical to the Sumerian scribal school, only larger in scale and more formalized in mechanism.

Notice what's consistent across these two cases, separated by four millennia and two continents. Neither the Sumerian scribal school nor the University of Bologna was primarily designed to make people smarter. Both were designed to solve the same social engineering problem: how does a large, complex institution identify and certify trustworthy specialists at scale?

Assessment wasn't a feature added to education. It was the reason education existed.

The imperial examination system, keju, was established under the Sui dynasty in 605 CE and abolished in 1905. Apart from interruptions, most notably a suspension of several decades under the Yuan dynasty, it operated for 1,300 years.

The problem keju was designed to solve was identical to that facing the Sumerian scribal school and the University of Bologna, only at an incomparably larger scale: how do you identify and elevate trustworthy officials across an empire of tens of millions, and later hundreds of millions, of people at a manageable cost?

Before keju, the answer was lineage and aristocratic networks. The political logic of keju was to break that monopoly, to let imperial power draw talent directly from a nationwide pool of candidates, cultivating loyalty that bypassed the old nobility.

In 2024, a research team led by Fangqi Wen of Ohio State University, with Erik H. Wang and Michael Hout of New York University, published a study in PNAS analyzing 3,640 Tang dynasty epitaphs to trace the relationship between career advancement, family background, and examination performance. Their conclusion: as the keju system matured, the predictive power of aristocratic lineage on career outcomes steadily declined, while the predictive power of examination results steadily rose. This was arguably the largest recorded social experiment in substituting standardized assessment for inherited status, spanning nearly three centuries at imperial scale, and on this evidence it worked.

But keju also left an unambiguous lesson: whatever the examination tested, the entire nation taught. The Four Books, the Five Classics, the eight-legged essay — this curriculum was not designed to cultivate the whole person. It was reverse-engineered from examination requirements.

Keju could not assess engineering aptitude, military command, or experimental science, so the supply of talent in those domains was chronically thin. In the 19th century, critics pointed to keju as a contributing cause of China's technological lag, an instance of the structural blind spot that any single assessment standard inevitably creates.

Keju's influence extended far beyond China. The Northcote–Trevelyan Report, formally published in 1854, was deeply shaped by it. The modern civil service framework that descended from that report reached the United States in 1883 as the Pendleton Civil Service Reform Act: keju's logic had traveled from China to Britain, and from Britain across the Atlantic.

Over 1,300 years, standardized testing displaced bloodlines as the engine of social mobility.

In 1862, the British government introduced the Payment by Results scheme: schools received four shillings per attending pupil per year; if a pupil also passed the annual inspection in reading, writing, and arithmetic, the school received an additional eight shillings.

Its architect, Robert Lowe, told Parliament: "If it is not cheap, it shall be efficient; if it is not efficient, it shall be cheap." From a fiscal standpoint, this was perfectly rational. From an educational standpoint, it engineered three distortions that persist to this day.

The poet and school inspector Matthew Arnold wrote at the time that teachers no longer thought about how to teach a subject well — they thought about how to get pupils through the inspection. Music, moral formation, and history: subjects that resisted simple inspection quietly vanished from timetables. Though nominally an assessment of students, the scheme in practice used pupils' scores to control teachers' income, stripping teachers of professional autonomy.
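The grant figures given for the scheme reduce to a short calculation. It ignores the finer conditions of the actual 1862 code and uses $G_{\max}$ as illustrative notation (s = shillings) for the most a school could earn per pupil:

$$
G_{\max} = \underbrace{4\,\text{s}}_{\text{attendance}} + \underbrace{8\,\text{s}}_{\text{passing inspection}} = 12\,\text{s},
\qquad
\frac{8\,\text{s}}{12\,\text{s}} = \frac{2}{3}.
$$

On these figures, two-thirds of the money a school could earn for each pupil hinged on one annual inspection in three subjects, which makes it unsurprising that teaching bent toward the inspection.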

Payment by Results was gradually phased out through the 1890s, but it laid bare an iron law:

The moment a metric becomes the valve through which resources flow, the entire system optimizes toward that metric, even at the cost of genuine capability development.

This is not a moral failing. It is systems dynamics.

From Sumer to Bologna, from keju to Payment by Results — this historical arc traces the same cycle: society invents an assessment system, people begin optimizing their behavior for the assessment, the system drifts from its original purpose, and then it iterates.

In 1975, British economist Charles Goodhart described the mechanism behind this cycle, in an observation later distilled by anthropologist Marilyn Strathern into the version most people quote: when a measure becomes a target, it ceases to be a good measure. The reason is that once people know what metric they are being evaluated on, they optimize for the metric rather than for the underlying capability the metric was designed to reflect.

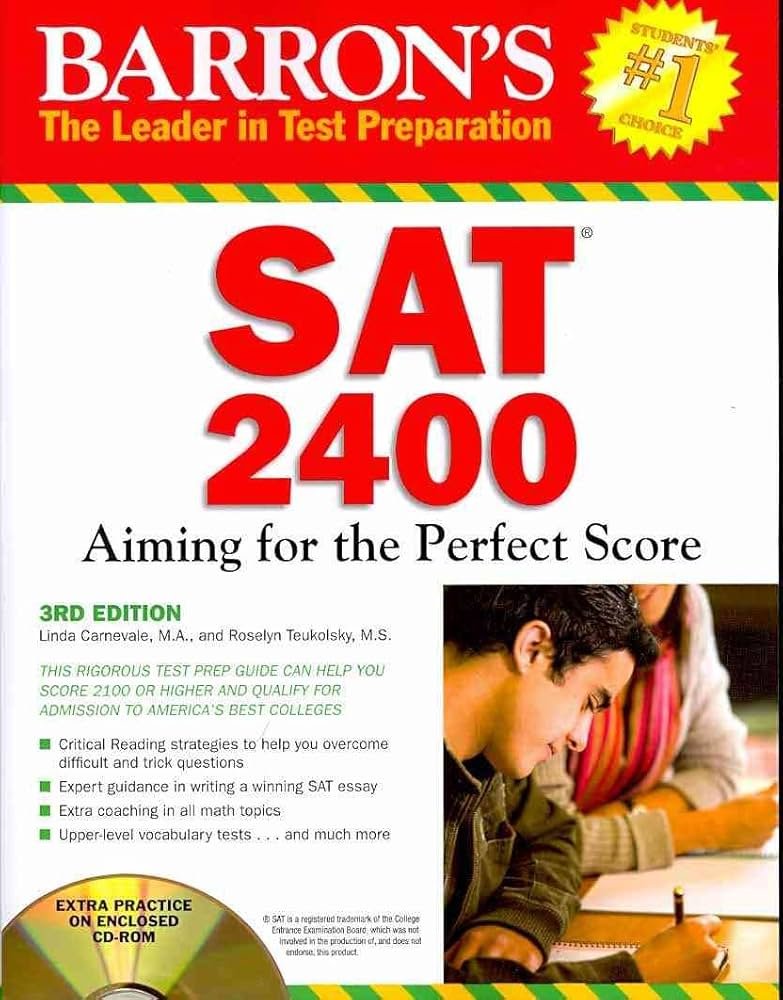

The history of education is a living textbook of Goodhart's Law: keju tested the eight-legged essay, so all of China drilled the eight-legged form; Payment by Results tested reading, writing, and arithmetic, so teachers taught only those three; the SAT tested standardized problems, so a thriving SAT prep industry emerged.

But this cat-and-mouse game historically operated under a speed constraint. Designing a new assessment framework took time. Developing counter-strategies to a new framework also took time. System iteration ran on the scale of years or decades.

AI has shattered that speed constraint.

Design a new writing assessment rubric today; by tomorrow, someone is using AI to generate essays that satisfy it perfectly. Revise the rubric next week; the AI output updates accordingly. This is not a metaphor. It is a literal fact. Any assessment system whose core depends on the form of output produced now faces Goodhart erosion at a speed previously impossible.

Every prior wave of technological disruption followed a common pattern: the printing press made copying cheap, displacing manual transcription; the calculator made arithmetic cheap, displacing mental computation; the search engine made information retrieval cheap, displacing memorization and manual lookup. Each of these technologies displaced a specific production process — you still needed to understand the answer; the path to the answer was shortened.

AI does something categorically different: it makes output that looks like deep thinking cheap.

This is a qualitative leap. An AI-generated essay is not merely written: in its logical structure, organization of argument, and quality of prose, it can routinely satisfy virtually every conventional criterion of written assessment. An AI-generated piece of code runs, carries comments, and handles standard edge cases.

Spence's theory tells us: for a signal to function, the cost of faking it must be prohibitively high. The printing press could not fake that you understood a book. The calculator could not fake that you built the right model. The search engine could not fake that you could synthesize information into a judgment. But AI, across a wide range of written assessment formats, can now fake that you completed the cognitive task.

For the first time, AI has severed the act of producing a particular form of knowledge output from the underlying signal it was supposed to carry.

From the Sumerian scribal school to the present, the ability to produce qualified output has been the most reliable proxy for competence, because production itself required the mobilization of genuine cognitive resources. When AI compresses the cost of that proxy to near zero, the entire signal architecture built around output-based assessment begins to lose its foundation.

There is a historical irony that almost everyone has missed.

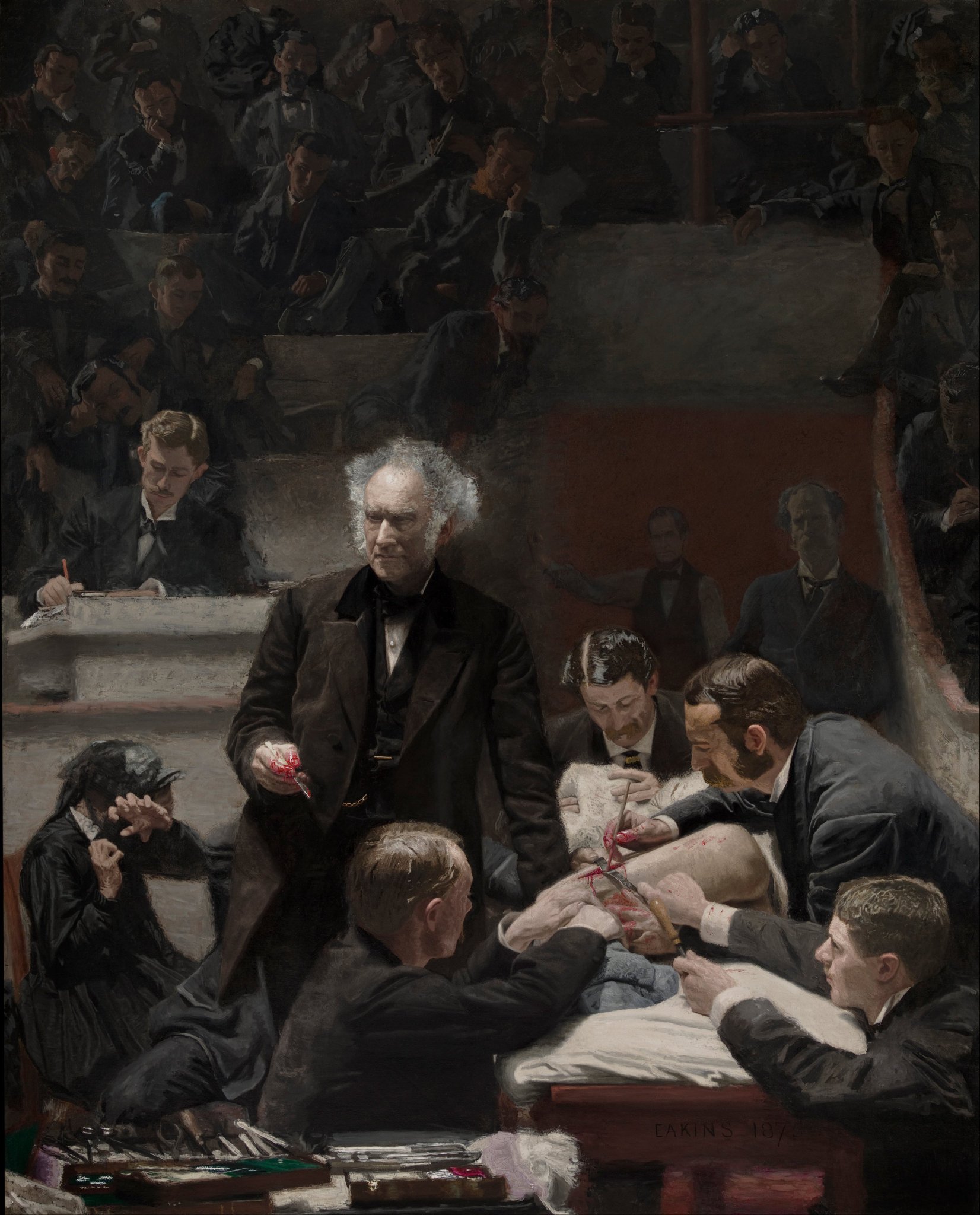

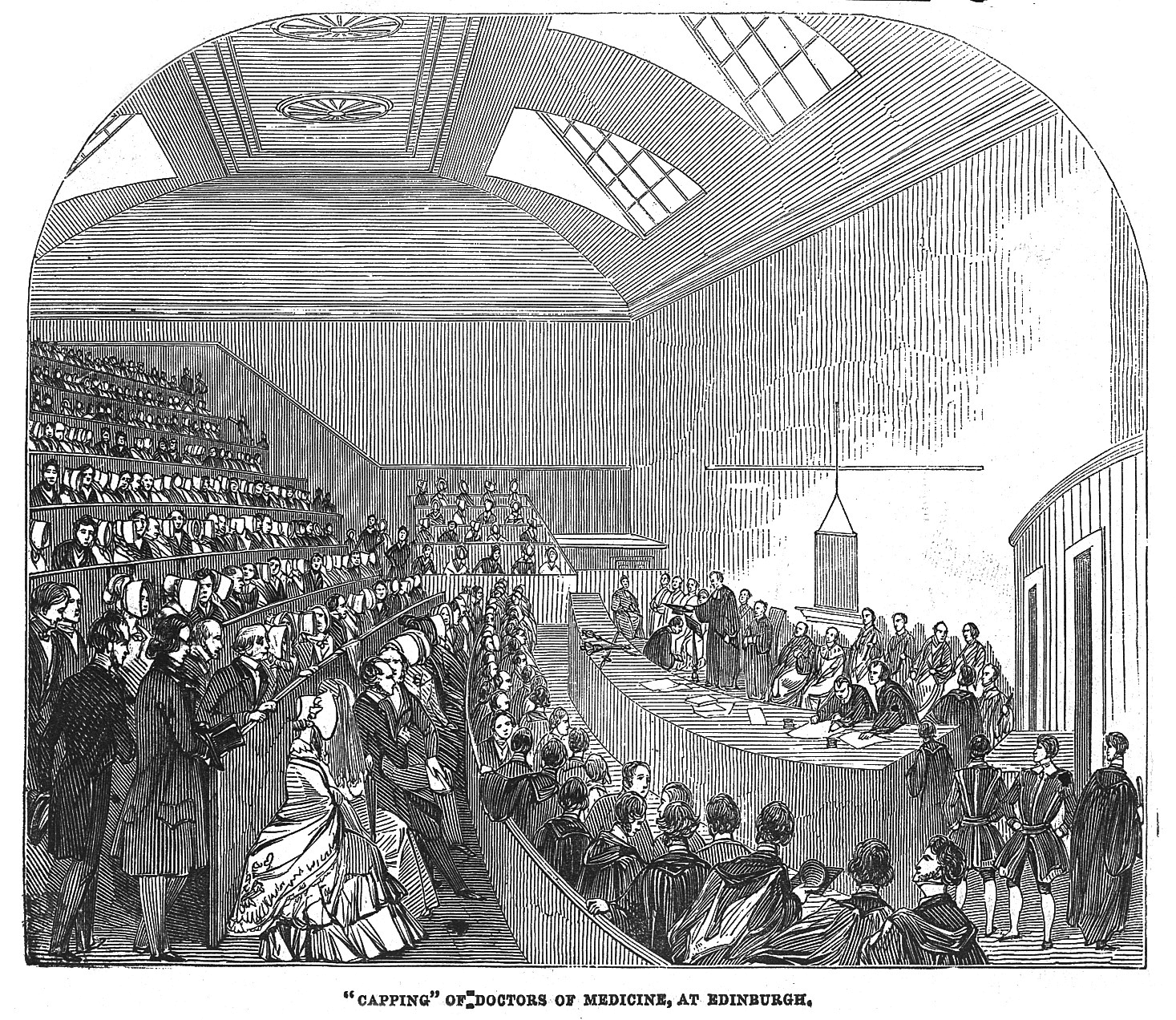

In the early centuries of European university development — Bologna, Oxford, Cambridge — the dominant form of assessment was the viva voce, the oral defense: a student presented their reasoning in real time before examiners, fielded follow-up questions, and could not have all their answers prepared in advance. It was a mechanism that genuinely tested whether the person themselves understood.

This oral format was displaced in the 18th and 19th centuries by large-scale written examinations, for entirely practical reasons: oral exams were expensive, subjective, and impossible to scale. Written tests took their place and evolved through the 20th century into the massive machinery of standardized testing.

Now, AI has stripped written output of its reliability as a signal, and universities around the world have begun to respond. Cambridge has expanded oral assessment across multiple disciplines since 2023. Several Australian universities have introduced post-AI vivas: students submit written work and must then explain the reasoning behind any passage, in real time, before an examiner. This is not a panicked improvisation. It is a structural return: back to some form of real-time, dialogic, non-delegable assessment.

This cycle is not coincidental. It points to a deeper law: assessment systems perpetually chase the dimension of genuine capability that is hardest to fake. In the age of handwriting, the ability to write was the ability itself; in the age of print, comprehension and application became the new core; in the AI age, what will be chased is the ability to exercise real-time judgment under genuine constraints, and to bear personal accountability for the outcome.

The oral exam cannot be delegated, not because AI lacks the intelligence, but because the person in the room is a real, identifiable individual who is accountable for the result.

That relationship of accountability cannot be subcontracted.

At this point, we can answer the central question: when AI makes answers as abundant as air, what becomes scarce?

Not smarter answers. AI's answers are often smart enough. What becomes scarce is:

1. The ability to define the right question.

AI is an extraordinarily powerful answer-generating machine, but it answers the question you give it, not the question that actually matters. In the real world, the hardest part has never been execution; it has always been diagnosing the problem correctly. Someone who can only use AI to execute tasks and someone who can identify which question is worth asking in the first place will produce outputs that differ by an order of magnitude. This diagnostic capacity cannot be outsourced to AI, because it requires being genuinely embedded in a context, with its stakes, its risks, and its value trade-offs.

2. The ability to make commitments under uncertainty.

AI generates answers, but AI does not sign its name to those answers and face accountability afterward. The core of genuine competence is shifting away from whether you can produce output toward whether you are willing to stand behind it and whether you have the judgment to make that commitment responsibly. This is a non-delegable human function because bearing responsibility requires an agent with real stakes.

3. The ability to recognize when an answer has failed.

AI-generated content typically cannot self-annotate where its conclusions stop holding. The ability to identify the boundaries of AI output, to detect where it begins to hallucinate, to know when it cannot be trusted — this is a metacognitive capacity that requires genuine domain understanding to perform. It is the capacity to verify, not merely to consume.

These three capabilities share a structural feature: they all occur before or after the output, not in the output itself.

The core capability that education must cultivate is shifting from producing high-quality content to directing, judging, verifying, and taking responsibility for content produced by AI.

History tells us that every technological disruption triggers an assessment crisis, and every assessment crisis eventually produces a new assessment paradigm. This time will be no different.

The question is not whether to assess. The question is what to assess. When answers become ambient, the signal of capability must attach itself to a carrier that is harder to fabricate. A growing body of practice is converging on three directions:

Deliverable.

Not an assignment. Not a performance. A genuine output that solved a real problem under real constraints: with a defined objective, defined boundaries, real stakeholders, and results that can be inspected. A portfolio is one form; a working prototype is another; a solution that actually addressed a real client's problem is another. The distinction from conventional assignments is this: a deliverable-based task cannot simply ask a student to write about X; it must ask them to create something that solves a specific problem for a specific audience.

Traces.

Not a record of whether AI was used, but of how the problem was defined, where resistance was encountered, which initial hypotheses were discarded, and what the basis of the critical judgments was. This is an auditable decision log. One important caveat: if AI can generate personalized fake traces based on personal background information, this mechanism faces the same erosion risk. Traces must therefore be coupled with real-time oral examination — they cannot stand alone.

Rubrics.

The best antidote to Payment by Results is not to abandon assessment. It is to decompose the assessment from a single metric into a multi-dimensional rubric. Not one score, but separate evaluations of the clarity of problem definition, quality of judgment, risk awareness, capacity for verification, and quality of orchestration in working with AI — with transparent scoring criteria.

The logic of these three together: learning happens in the process; capability is verified in the deliverable; the signal is forged in the multi-dimensional rubric.

A five-thousand-year retrospective:

The Sumerian scribal school, emerging around 3000 BCE, trained trustworthy information processors for temples, palaces, and merchants; assessment was its core function from the moment of its birth. The University of Bologna, conventionally dated to 1088, began as a guild and invented the degree: not merely a proof of knowledge, but a license of entry, a professional passport. The imperial examination system, beginning in 605 CE, demonstrated over 1,300 years that large-scale standardized assessment could displace hereditary status, but it could not escape overfitting: it shaped the content of national education and left enormous gaps in technical capability. Payment by Results in 1862 exposed assessment's iron law: the moment assessment becomes a resource valve, systems inevitably narrow toward what can be quantified. Spence's signaling theory in 1973 revealed education's most uncomfortable truth: its market value comes not entirely from what it teaches, but from what it certifies about who you are.

And now AI, by driving the cost of output toward zero, has pushed the signal problem to a prominence it has never had before — and for the first time, what it threatens is not the signal value of any particular skill, but the foundation of the entire architecture built around written output as proof of capability.

This historical arc points to a sober conclusion:

Education's core function will not disappear because of AI — but its form will change.

As technology dramatically compresses the production and transmission of knowledge, education's genuinely scarce function becomes clearer: forging credible signals of competence, so that society can confidently entrust high-value opportunities to people who are genuinely capable of carrying them out responsibly.

This is not education's corruption. This is what education has always done, and what it must now be more honest about.

Plato's Academy embodied a different ideal — learning purely for the sake of understanding, without credentials or certification. Beautiful, but fragile. It endured for centuries, then was closed by an administrative decree. The Sumerian scribal school, the University of Bologna, the imperial examination system — these institutions, built around assessment and certification as their load-bearing structure, lasted far longer and left far deeper marks, because they solved a real social problem: in a large-scale society where people cannot all know one another personally, how do you build trust at scale?

In the AI era, that question is more urgent than it has ever been. When anyone can generate impressive output, the fact that a person can actually do it becomes harder to prove than at any point in history, and more valuable than ever before.

Education remains the most important forge for casting that proof.

It simply has to learn to cast a new kind of metal.